Mark Cuban posted a question this morning that landed harder than most of the AI takes circulating in 2026. He framed it as the central blocker for enterprise AI adoption, and he is largely correct — though his framing collapses two distinct problems into one, and the engineering answer is more interesting than the meme it is becoming.

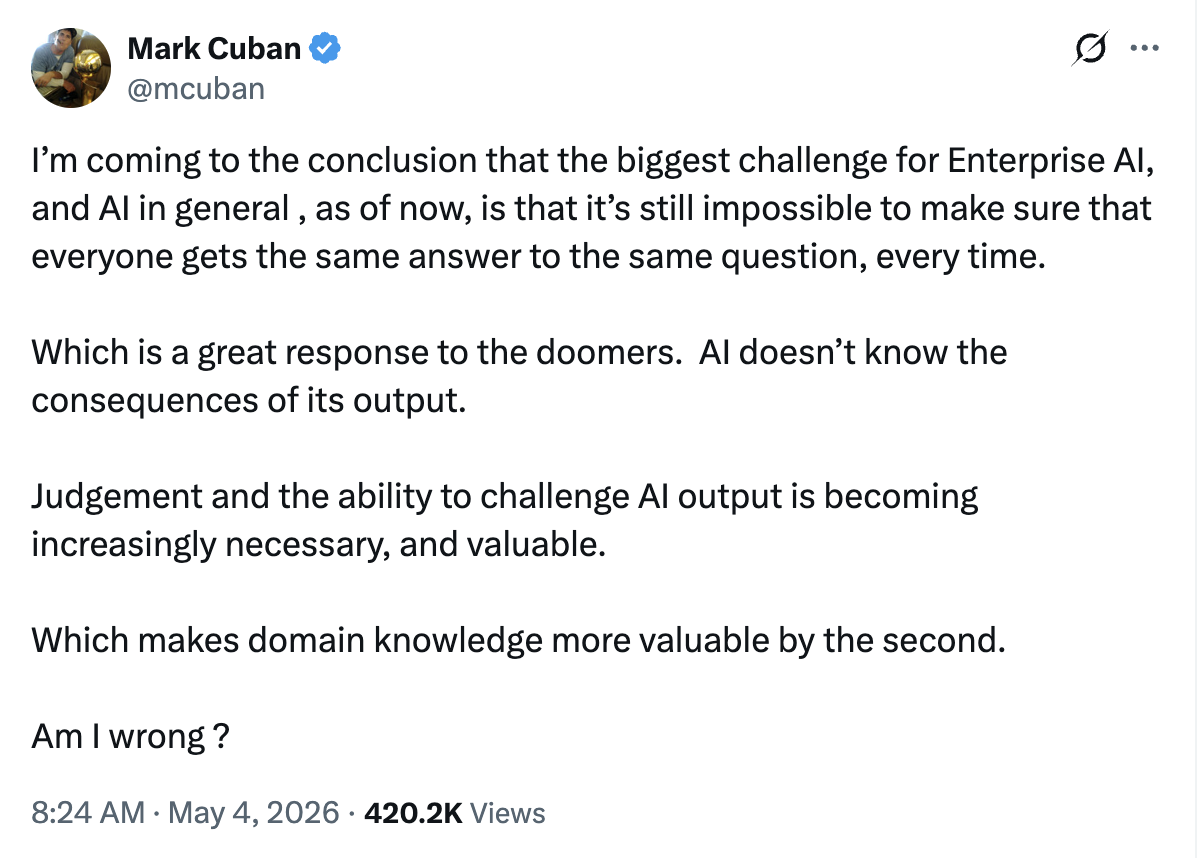

His tweet, quoted in full:

I'm coming to the conclusion that the biggest challenge for Enterprise AI, and AI in general, as of now, is that it's still impossible to make sure that everyone gets the same answer to the same question, every time. Which is a great response to the doomers. AI doesn't know the consequences of its output. Judgement and the ability to challenge AI output is becoming increasingly necessary, and valuable. Which makes domain knowledge more valuable by the second.

Am I wrong?

Mark Cuban (@mcuban), 8:24 AM · May 4, 2026

He is not wrong. He is also not entirely right. The shape of the actual problem matters because it determines what enterprises should be building, and most of the post-Cuban commentary is going to recommend the wrong fix.

What is the determinism gap?

The determinism gap is the difference between traditional enterprise software, which produces identical output for identical input, and large language models, which produce probabilistic output that can vary across runs even when temperature is set to zero. The gap is real but not uniform — some AI surfaces require deterministic output (medical records, contract terms, regulated lookups), while others depend on probabilistic variation as the product feature (chat tone, ideation, marketing copy). The architectural answer is not to make all AI deterministic but to classify each surface and build validator infrastructure that enforces determinism only where it is mandatory.

A SQL query against a relational database with the same inputs returns the same rows every time. That guarantee is the foundation of every enterprise control system built since the 1980s — financial reconciliation, audit trails, compliance attestations, regulatory reporting. The entire infrastructure of trust in business software rests on the assumption that X always equals Y.

Large language models do not offer that guarantee. Even at temperature zero, sampling implementation details and infrastructure-level non-determinism (GPU floating-point ordering, parallel decoding heuristics) can produce subtle variation across runs, and because each token is predicted from the tokens before it, one early difference can change everything that follows. Across providers, model versions, and hardware tiers, the variation compounds. An enterprise treating an LLM call like a database query is making a category error that will eventually generate a compliance incident, a customer-service contradiction, or a regulatory finding.
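
One ingredient of that infrastructure-level variation is easy to reproduce on any machine: floating-point addition is not associative, so the same numbers summed in a different order can give a different result. The sketch below only illustrates that arithmetic fact in plain Python. The `greedy_pick` helper and the "approve"/"deny" tokens are hypothetical, and nothing here models a specific GPU kernel or provider.

```python
# Floating-point addition is not associative: grouping changes the result.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)

print(left_to_right == right_to_left)  # False
print(left_to_right, right_to_left)    # 0.6000000000000001 0.6


def greedy_pick(logits: dict[str, float]) -> str:
    """Temperature-zero style choice: return the highest-scoring token.

    On an exact tie, max() returns the first key it encountered.
    """
    return max(logits, key=logits.get)


# Two "runs" that differ only in the order the same three terms were summed.
run_1 = greedy_pick({"deny": 0.6, "approve": (0.1 + 0.2) + 0.3})
run_2 = greedy_pick({"deny": 0.6, "approve": 0.1 + (0.2 + 0.3)})

print(run_1, run_2)  # approve deny
```

The scores differ only in the last bit, but a greedy choice turns that into a different token, and every later token is conditioned on it.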

Cuban's framing is the experience of every Chief Risk Officer who has tried to put an LLM in front of a binding business workflow. The fact that this is the meme of the week tells you the conversation has finally caught up to the architecture.

Where Cuban's framing breaks down

The framing breaks down at the word "everyone."

Not every AI surface needs to give everyone the same answer to the same question. The premise quietly assumes that all AI workloads are interchangeable with database queries, and that variation across runs is universally a defect. That is not the right test for half of the productive uses of generative AI.

- A regulated-lookup surface — what is the recommended dosage of this medication for a patient with this combination of conditions and allergies? Determinism is mandatory. Variation is malpractice.

- A contract-clause surface — does this MSA include a limitation-of-liability clause that aligns with our 2026 standard, and if not, what should the redline look like? Determinism is mandatory. Variation is legal risk.

- A customer-service answer surface — what is the return policy on items purchased during the holiday window? Determinism is mandatory at the policy level, but the phrasing of the answer can vary as long as the substance is consistent. Constrained variation is acceptable.

- A persona-driven concierge chat surface — how does our brand voice answer a question about preventative dental care? Probability is the feature. Eight tenants on the same widget should not produce eight identical answers; the persona is the product.

- A creative ideation surface — give me ten distinct angles for a product launch announcement, ranked by predicted resonance with our existing customer cohort. Probability is the entire output. Determinism would mean the tool produces ten copies of the same idea.

The right question is not "can we make AI deterministic." The right question is per surface: does this need determinism, or does it need bounded probability, and what infrastructure enforces the choice?
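
The per-surface question can be written down as data before any gate is built. The following is a minimal sketch of such a registry, assuming three classes drawn from the list above; the surface identifiers and gate names are hypothetical labels chosen for illustration, not a description of any shipped system.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    DETERMINISTIC = "deterministic"            # same substance every time
    CONSTRAINED = "constrained_variation"      # fixed substance, free phrasing
    PROBABILISTIC = "bounded_probabilistic"    # variation is the product


@dataclass(frozen=True)
class Surface:
    name: str
    mode: Mode
    gate: str  # hypothetical name of the validator that enforces the mode


SURFACES = [
    Surface("regulated_lookup", Mode.DETERMINISTIC, "reference_table_match"),
    Surface("contract_clause", Mode.DETERMINISTIC, "clause_library_match"),
    Surface("customer_service", Mode.CONSTRAINED, "policy_fact_check"),
    Surface("concierge_chat", Mode.PROBABILISTIC, "emergency_phrase_gate"),
    Surface("creative_ideation", Mode.PROBABILISTIC, "schema_gate"),
]


def surfaces_requiring(mode: Mode) -> list[str]:
    """List the surfaces classified under a given mode."""
    return [s.name for s in SURFACES if s.mode is mode]


print(surfaces_requiring(Mode.DETERMINISTIC))
```

Keeping the classification in one versioned structure makes the choice reviewable: a surface with no entry is a surface nobody has classified yet.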

What validator architecture actually looks like

Validator architecture is a software pattern in which AI-generated output passes through one or more deterministic gates before it can take consequential action. The gates can be schema validators, substring or regex matchers, structural rule evaluators, threshold scorers, or secondary AI critics. The pattern decouples generation (probabilistic, fast, creative) from validation (deterministic, slower, conservative) and treats validation as a first-class engineering surface. Properly implemented, it allows enterprises to deploy AI in regulated workflows without giving up the safety properties of traditional software.

The pattern has five repeating shapes. We see all five in production today across our deployments and our clients' systems.

Schema gates. Any AI output that has to be machine-readable downstream — a lead-form submission, a structured medical note, a calendar invite, a tool-call argument — should pass through a schema validator before it reaches the downstream system. The model produces JSON; the schema rejects malformed payloads with a specific error; the system either retries with the error context or hands off to a human queue. This is the cheapest, highest-leverage validator pattern.

Every enterprise AI deployment should have this layer.
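
As a rough sketch of that loop, the example below validates a model's JSON against a small hand-rolled schema, feeds specific errors back on retry, and hands off to a human queue if the payload never passes. Everything named here is an assumption for illustration: the `LEAD_SCHEMA` fields, the `gated_generate` helper, and the stub model. A production system would more likely use a JSON Schema validator than this hand-written check.

```python
import json
from typing import Callable

# Hypothetical lead-form schema: field name -> required Python type.
LEAD_SCHEMA = {"name": str, "email": str, "tenant_id": str}


def validate(raw: str, schema: dict[str, type]) -> list[str]:
    """Return specific errors; an empty list means the payload passes."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(payload, dict):
        return ["top-level value must be an object"]
    errors = []
    for field, expected in schema.items():
        if field not in payload:
            errors.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            errors.append(f"wrong type for {field}: expected {expected.__name__}")
    return errors


def gated_generate(generate: Callable[[list[str]], str],
                   max_attempts: int = 2) -> tuple[str, object]:
    """Call the model, retry with the error context, hand off if it never passes."""
    errors: list[str] = []
    for _ in range(max_attempts):
        raw = generate(errors)  # errors from the previous attempt are the retry context
        errors = validate(raw, LEAD_SCHEMA)
        if not errors:
            return ("accepted", json.loads(raw))
    return ("human_queue", errors)


def stub_model(errors: list[str]) -> str:
    """Stand-in for an LLM call: omits tenant_id until told about the error."""
    if not errors:
        return '{"name": "Ada", "email": "ada@example.com"}'
    return '{"name": "Ada", "email": "ada@example.com", "tenant_id": "t-1"}'


status, result = gated_generate(stub_model)
print(status, result)
```

The gate itself never varies: the same payload always produces the same list of errors, whatever the model did to produce it.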

Substring and pattern gates. Some failure modes are best caught with a precommitted pattern match before the AI gets near the response. In our concierge widget deployments, an emergency phrase like "if this is a medical emergency" or a 911 reference fires a deterministic routing path *before* the language model gets a turn — the user sees the emergency-routing UI, not whatever the LLM might have said. The substring is the kill switch; the LLM is the conversation.
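
A minimal sketch of that ordering, assuming the match runs on the inbound user message: the patterns are checked first, and the model callable is only invoked when none of them fire. The pattern list, the `route` function, and the return shape are illustrative assumptions, not the widget's actual code.

```python
import re
from typing import Callable

# Precommitted patterns (illustrative; a real list would be reviewed and versioned).
EMERGENCY_PATTERNS = [
    re.compile(r"\bmedical emergency\b", re.IGNORECASE),
    re.compile(r"\b911\b"),
]


def route(message: str, llm: Callable[[str], str]) -> dict:
    """Check the kill-switch patterns first; call the model only if none match."""
    for pattern in EMERGENCY_PATTERNS:
        if pattern.search(message):
            # Deterministic path: the model is never invoked for this message.
            return {"route": "emergency_ui", "matched": pattern.pattern}
    return {"route": "llm", "reply": llm(message)}


calls: list[str] = []


def stub_llm(message: str) -> str:
    """Stand-in for a model call that records whether it was reached."""
    calls.append(message)
    return "Happy to help."


emergency = route("What do I do if this is a medical emergency?", stub_llm)
normal = route("What are your opening hours?", stub_llm)
print(emergency["route"], normal["route"], len(calls))  # emergency_ui llm 1
```

Because the model is passed in as a callable and only invoked on the fall-through path, a test can assert that it was never reached for an emergency message.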

Structural rule evaluators. When the validation criteria are complex but precommitted — does this generated post have an atomic answer block, at least two H2 headings, an SEO description in the 120-160 character window, and a word count above 800? — a deterministic rule evaluator is dramatically more reliable than asking another LLM. The rules are tunable, version-controlled, and produce identical scores for identical input.

We use this pattern in our content pipeline today; it is what gates whether a draft advances from draft to deploy stage.
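
The four checks named above translate almost directly into code. This is a sketch under stated assumptions rather than our pipeline's implementation: the draft envelope is assumed to be a dictionary with `atomic_answer`, `body`, and `seo_description` keys, and H2 headings are assumed to be markdown lines starting with `## `.

```python
import re


def evaluate(draft: dict) -> dict[str, bool]:
    """Apply precommitted structural rules; identical input gives identical results."""
    body = draft.get("body", "")
    return {
        "has_atomic_answer": bool(draft.get("atomic_answer", "").strip()),
        "has_two_h2": len(re.findall(r"^## ", body, flags=re.MULTILINE)) >= 2,
        "seo_length_ok": 120 <= len(draft.get("seo_description", "")) <= 160,
        "word_count_ok": len(body.split()) > 800,
    }


# A synthetic draft that satisfies every rule.
good_draft = {
    "atomic_answer": "A short, direct answer to the headline question.",
    "body": "## Intro\n" + "word " * 500 + "\n## Details\n" + "word " * 400,
    "seo_description": "x" * 140,
}

# The same draft with an SEO description outside the 120-160 window.
bad_draft = {**good_draft, "seo_description": "too short"}

print(evaluate(good_draft))
print(evaluate(bad_draft)["seo_length_ok"])  # False
```

Each rule is a named boolean, so a failed draft reports which rule it failed instead of an opaque score.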

Threshold scorers. When you can compute a numeric quality score from the output, set a per-tenant threshold and gate on it. Drafts above the threshold advance; drafts below it are held for review. The threshold is a knob, not a hardcoded value, and different tenants can run different thresholds for different stages of program maturity.
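
A sketch of the knob, assuming the score is simply the fraction of structural rules passed and that thresholds live in a per-tenant table with a default. The tenant names, threshold values, and the choice to let a score exactly at the threshold advance are all illustrative assumptions.

```python
DEFAULT_THRESHOLD = 0.75

# Hypothetical per-tenant overrides; in practice this would live in configuration.
TENANT_THRESHOLDS = {"tenant_a": 0.9, "tenant_b": 0.6}


def score(rule_results: dict[str, bool]) -> float:
    """Fraction of rules passed; an empty result set scores zero."""
    if not rule_results:
        return 0.0
    return sum(rule_results.values()) / len(rule_results)


def gate(tenant_id: str, quality_score: float) -> str:
    """Advance drafts at or above the tenant's threshold; hold the rest for review."""
    threshold = TENANT_THRESHOLDS.get(tenant_id, DEFAULT_THRESHOLD)
    return "advance" if quality_score >= threshold else "hold_for_review"


results = {"rule_1": True, "rule_2": True, "rule_3": True, "rule_4": False}
draft_score = score(results)  # 0.75

print(gate("tenant_a", draft_score))  # hold_for_review
print(gate("tenant_b", draft_score))  # advance
```

The same draft advances for one tenant and is held for another, and changing that behavior is a configuration edit, not a code change.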

Secondary AI critics — bounded. When the validation question is genuinely subjective ("does this clinical note read naturally to an English-speaking clinician?") a second LLM in a critic role is reasonable. The trap is the hallucination echo chamber — if the generator and the critic share the same weights and training data, they often agree on plausible-sounding nonsense.

The mitigation is to give the critic an *immutable ground-truth source* (a schema, a fact database, a reference set) and to evaluate the critic's effectiveness against an independent human-labeled benchmark. A critic without ground truth is just a co-conspirator.
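
Both halves of that mitigation can be sketched in a few lines: a deterministic ground-truth check that runs before the critic is consulted, and an agreement rate measured against human labels. The policy facts, the benchmark rows, and the always-agreeing stub critic below are invented for illustration only.

```python
from types import MappingProxyType
from typing import Callable

# Read-only ground truth (hypothetical policy facts); the critic cannot override it.
GROUND_TRUTH = MappingProxyType({"return_window_days": 30, "restocking_fee_pct": 0})


def bounded_verdict(claims: dict, critic: Callable[[dict], bool]) -> bool:
    """Check facts deterministically first; the critic only judges what remains."""
    for key, value in claims.items():
        if key in GROUND_TRUTH and GROUND_TRUTH[key] != value:
            return False  # contradicts ground truth: the critic is not consulted
    return critic(claims)


def critic_agreement(critic: Callable[[dict], bool],
                     benchmark: list[tuple[dict, bool]]) -> float:
    """Fraction of human-labeled examples where the bounded verdict matches the label."""
    hits = sum(bounded_verdict(claims, critic) == label for claims, label in benchmark)
    return hits / len(benchmark)


def agreeable_critic(claims: dict) -> bool:
    """Stand-in for a critic that approves everything it is shown."""
    return True


# Human-labeled benchmark: (claims extracted from an output, acceptable?).
BENCHMARK = [
    ({"return_window_days": 30}, True),
    ({"return_window_days": 90}, False),  # factual error, caught by ground truth
    ({"tone": "dismissive"}, False),      # subjective failure, left to the critic
]

agreement = critic_agreement(agreeable_critic, BENCHMARK)
print(round(agreement, 2))  # 0.67
```

The ground truth catches the factual error even with a critic that approves everything, and the benchmark exposes that the critic adds nothing on the subjective case.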

These five shapes (schemas, patterns, structural rules, threshold scorers, bounded critics) are not a complete catalog. They are the load-bearing primitives we have shipped repeatedly into production across regulated industries. Every enterprise AI surface we have audited could use more of all five, and we have rarely seen a buyer who could not tell a gated surface from an ungated one once we pointed it out.

How this is shipped today, in production

The architecture is not theoretical. Several patterns described above are running on real customer infrastructure right now.

A content generation pipeline we operate produces drafts through a Bedrock Claude call, persists them to DynamoDB, and routes them through a separate quality-check Lambda before they advance toward deployment.

The quality-check Lambda is the generate-then-validate split in production, built on the structural-rule pattern: it reads the structured envelope produced by the writer, evaluates atomic-answer presence, FAQ count, SEO field length, word count, and other structural rules, and either advances the draft to the deploy stage, holds it for editorial review, or kills it as structurally broken. The drafts an LLM produces vary; the gate they pass through does not.

A concierge widget we operate across multiple customer sites — including tenants in healthcare, legal, and consumer-services verticals — uses precommitted emergency-phrase routing as a hard gate. The substring match runs before the language model returns its first token. A user typing "if this is a medical emergency" never sees an LLM-generated response to that question; they see a deterministic emergency-routing UI with the actual phone number for the practice.

The model is not trusted with a life-safety decision because the architecture refuses to delegate one to it.

A lead-capture path that flows from those concierge widgets into our central tenant orchestration platform validates the inbound payload against an explicit schema. A request with a missing or malformed tenant field is rejected at the API boundary with a 400, never reaches DynamoDB, and never produces a downstream record that has to be reconciled later. The schema is the gate; the LLM is upstream of it.

A blog post we published on April 28 reached source-citation status in Google's AI Overview within five days, alongside Gemini and ChatGPT mobile placements that named us by category. Per our editorial policy, we do not edit pages that have reached citation-window status during the 30-60 day re-evaluation period — edits trigger re-crawl, which can destabilize the citation.

That is a temporal determinism gate over a probabilistic system (search engines), and it exists because we have measured what happens when you violate it.

These are real systems shipping real work. The validator pattern is not an architectural diagram on a slide. It is an operational discipline that compounds over time, deployment by deployment.

Why bootstrapped firms ship validator infrastructure faster

There is a pattern visible across the enterprise AI vendor landscape that deserves explicit naming.

The firms with the most ambitious AI demos tend to also have the largest fundraising rounds, the largest sales teams, and the largest gap between demo and production. The firms shipping validator infrastructure — the unsexy plumbing that determines whether an AI deployment survives a regulatory audit — tend to be smaller, more constrained, and more disciplined about what they ship.

This is not a coincidence. Validator infrastructure is hard to demo. It does not produce the kind of screenshot that drives a Series A round. It produces a quiet, boring stability profile that becomes obvious after the third or fourth time the deployment passes a compliance review without intervention.

Bootstrapped firms operating in mid-market and enterprise regulated industries have an advantage here, because they cannot afford the cost of a deployment that fails its first compliance review. Their incentive structure forces them to build the validator layer before they build the demo. By the time they have customers in production, the validator architecture is already load-bearing infrastructure rather than retrofit.

We have written elsewhere about the three pillars of production AI — Intelligence Core, Discovery and Authority, Operational Excellence — and how the gap between pilot and production is rarely a model problem. It is almost always a missing infrastructure layer. Cuban's tweet describes the symptom. The architectural answer has been sitting in production at boutique firms for the past eighteen months.

If you are evaluating an AI vendor and your conversation never moves past model selection, prompt engineering, and "how creative can it be," you are evaluating a demo, not a deployment. Ask about their validator architecture. If they cannot describe it concretely, in terms of named gates and named failure modes, they have not built one.

What this means for buyers

For enterprise AI buyers, the determinism gap reframes the procurement conversation. The right diagnostic question is not "how accurate is your AI?" but "what gates does your AI output pass through before it reaches a customer or a database?" Vendors that can answer with named, versioned, deterministic validators have built defensible production systems. Vendors that cannot are selling demos, regardless of the underlying model quality. Buyers should expect to see schema validation, pattern gates, structural rule evaluators, and threshold scorers as line-item architectural components of any AI deployment they sign for.

If you are a CIO, CTO, or Chief Risk Officer evaluating an enterprise AI vendor in 2026, the right diagnostic move is to walk through every surface where the AI's output reaches a customer, a database, an external API, or a system of record. For each surface, ask three questions:

- What is the output that surface produces?

- What deterministic gate does that output pass through before it takes effect?

- If the gate fails, what happens — does the system block, retry, fall back to a human, or silently log?
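
The three questions map onto one row per surface, which makes the walk-through easy to record and re-run. The sketch below is an assumed format, not an audit tool we ship: the field names and example surfaces are hypothetical, and treating "silently log" as a finding is our reading of the third question.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class OnFailure(Enum):
    BLOCK = "block"
    RETRY = "retry"
    HUMAN = "fall_back_to_human"
    SILENT_LOG = "silently_log"


@dataclass(frozen=True)
class SurfaceAudit:
    surface: str                      # where the output lands
    output: str                       # question 1: what the surface produces
    gate: Optional[str]               # question 2: the deterministic gate, if any
    on_failure: Optional[OnFailure]   # question 3: what happens when the gate fails


def findings(rows: list[SurfaceAudit]) -> list[str]:
    """Flag surfaces with no gate, or with a gate whose failure stops nothing."""
    issues = []
    for row in rows:
        if row.gate is None:
            issues.append(f"{row.surface}: no deterministic gate")
        elif row.on_failure in (None, OnFailure.SILENT_LOG):
            issues.append(f"{row.surface}: gate failure does not stop the output")
    return issues


AUDIT = [
    SurfaceAudit("lead_capture_api", "lead record", "payload_schema", OnFailure.BLOCK),
    SurfaceAudit("crm_notes_sync", "summary text", None, None),
    SurfaceAudit("email_drafts", "outbound email", "tone_rules", OnFailure.SILENT_LOG),
]

for issue in findings(AUDIT):
    print(issue)
```
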

The same diagnostic applies in reverse if you are evaluating your own internal AI deployments. Most enterprises we audit have at least one surface where the AI output flows directly into a system of record with no validator gate. That is the deployment most likely to produce the compliance incident that ends the AI program.

It is also the deployment most likely to be running on the most sophisticated model the vendor offers — because the model was the focus of the build, and the gate was deferred.

Cuban's tweet is going to drive a quarter's worth of conversations like this. The vendors who win the next eighteen months will be the ones who already have the answer.

The bottom line

Cuban's framing — that the determinism gap is the central enterprise AI problem and that judgment and domain knowledge are now the bottleneck — is essentially right at the level of business consequence. It is incomplete at the level of architecture, because the gap is not uniform across surfaces and the engineering answer is not "make AI deterministic" but "build validator infrastructure that enforces determinism only where it belongs."

The firms that will compound advantage over the next eighteen months are the ones that have classified every AI surface in their stack into deterministic-required or bounded-probabilistic, built the right gate for each, and made the gate a versioned, observable, testable production component rather than an afterthought.

This is not the most photogenic part of an AI program. It is the part that survives an audit.

Next steps

If you are responsible for an AI deployment that touches customer data, regulated workflows, or systems of record, the fastest way to see where you stand is to run a structural audit of the validator gates already in place. We do this as a focused engagement: we walk every surface, classify it, identify the missing gates, and deliver an engineering-grade gap report with named remediations.

For a starting point on the broader machine-legibility stack — schema, atomic architecture, llms.txt, semantic HTML — our AEO infrastructure service is the canonical $1,450 build. It is the foundation under any validator architecture, because the same atomic structure that drives AI citations is what your validators will check against.

To see what validator architecture looks like in our own production infrastructure, the Sentinel ops layer is the showroom: a fleet of investigate-only AI agents running on AWS Bedrock that monitor our own systems for failure modes, file tickets, and notify the right channel.

Three production workloads run today — a Diagnostics Agent for tenant uptime forensics, a GH Triage Agent for CI failure classification, and a Pipeline Hang Detector for content-pipeline anomalies — and the same architecture is available to clients as a Sentinel-pattern monitoring retainer running against the buyer's own AWS Bedrock account. Every workload follows the same shape — signal, detector, structured tool calls, schema-validated ticket, deterministic notification — and every workload is auditable end to end.

For the deepest cut, contact our team and we will work through your specific deployment surfaces with you. The validator architecture conversation is the one most enterprise AI vendors are not ready to have. We are.